AB Testing 101: A Comprehensive Guide

At ezbot, we've dug into the basics of AB testing before. If you're looking for a primer on what AB testing is and how it works, that earlier piece is a great place to start.

We're creating this comprehensive guide because we believe that true empowerment comes through knowledge. While our platform makes optimization accessible, we're committed to making our customers experts in their own right - not just users of our tools. This guide represents our dedication to equipping you with the foundational understanding and strategic mindset needed to drive meaningful results through testing, regardless of your experience level.

Whether you're just starting your optimization journey or looking to refine your approach, this resource will serve as your roadmap to data-driven growth.

1. Introduction to AB Testing

AB testing, also known as split testing, is a methodology that compares two versions of a webpage, app, email, or other digital asset to determine which one performs better. It involves showing two variants (A and B) to different segments of users at the same time and measuring which variant drives more conversions, engagement, or other desired outcomes.

For businesses across all industries, AB testing has become an essential practice for growth and optimization. Rather than making changes based on gut feelings or assumptions, AB testing allows companies to make data-driven decisions that can significantly impact revenue, user experience, and customer satisfaction. This methodical approach to optimization eliminates guesswork and provides concrete evidence for why certain designs, copy, or features perform better than others.

The impact of AB testing can be seen across numerous key metrics that directly affect business success, including:

- Conversion rates (sales, sign-ups, downloads)

- Engagement metrics (time on site, page views, click-through rates)

- Revenue metrics (average order value, customer lifetime value)

- User experience metrics (bounce rates, form completions)

The practice of experimental testing has a rich history in marketing and product development. While traditional market research methods have existed for decades, digital AB testing as we know it today emerged in the early 2000s alongside the growth of e-commerce and digital marketing. Companies like Google, Amazon, and Facebook pioneered large-scale experimentation, sometimes running thousands of tests simultaneously. Today, AB testing has evolved from simple comparisons of webpage elements to sophisticated multivariate testing across platforms, often powered by machine learning algorithms that can analyze massive datasets and identify winning variations faster than ever before (like us at ezbot.ai!).

2. The Scientific Method in AB Testing

At its core, effective AB testing is an application of the scientific method to business optimization. This structured approach begins with forming a hypothesis—a clear, testable prediction about how a specific change might improve performance. A strong hypothesis is specific, measurable, and based on both data insights and user behavior research. For example, rather than simply testing "a new button color," a proper hypothesis might state: "Changing our CTA button from blue to orange will increase click-through rates by 15% because orange creates more visual contrast on our page."

Creating a solid experiment design is essential for valid results. This includes determining which variables to test, ensuring that only one element changes between variants (unless conducting multivariate tests), and establishing proper control groups. Experiment design also involves deciding how traffic will be split between variants—typically 50/50 for simple AB tests, though different allocations may be appropriate in certain situations.
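One common way to implement a stable traffic split is to hash a user identifier into a bucket. The sketch below is a minimal illustration of that idea, not a description of how any particular platform does it; the function name `assign_variant`, the experiment key, and the choice of SHA-256 are all assumptions made for the example.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Deterministically assign a user to variant 'A' or 'B'.

    The same (user_id, experiment) pair always maps to the same variant,
    so a returning visitor keeps seeing the same version. `split` is the
    share of traffic sent to 'A' (0.5 gives a 50/50 split).
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Turn the first 8 hex digits into a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "A" if bucket < split else "B"


if __name__ == "__main__":
    counts = {"A": 0, "B": 0}
    for i in range(10_000):
        counts[assign_variant(f"user-{i}", "cta-color")] += 1
    print(counts)  # the two counts should be close to each other
```

Including the experiment name in the hash means a user who lands in A for one test is not automatically in A for every other test.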

Understanding statistical significance is crucial for interpreting test results. Statistical significance indicates how unlikely the observed difference between variants would be if the change actually had no effect. Most businesses aim for a 95% confidence level (a significance threshold of 0.05) before declaring a test conclusive. Equally important is calculating the appropriate sample size needed for reliable results. Testing platforms often include calculators that determine how many visitors or conversions are needed based on your baseline conversion rate and the minimum detectable effect you want to measure.
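To make the sample size and significance ideas concrete, here is a rough sketch using the standard normal-approximation formulas for comparing two conversion rates. It assumes a two-sided test, equal traffic to each variant, and 80% power by default; the function names are ours for illustration, and real calculators may use slightly different formulas.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline: float, relative_mde: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per variant to detect a relative lift.

    baseline:     current conversion rate, e.g. 0.05 for 5%
    relative_mde: minimum detectable effect as a relative lift, e.g. 0.2 for +20%
    """
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided threshold
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


if __name__ == "__main__":
    print(sample_size_per_variant(baseline=0.05, relative_mde=0.2))
    print(two_proportion_p_value(500, 10_000, 600, 10_000))
```

Notice how quickly the required sample grows as the minimum detectable effect shrinks: halving the effect you want to detect roughly quadruples the visitors you need.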

Common statistical errors to avoid include stopping tests too early (before reaching the planned sample size), misinterpreting correlation as causation, ignoring external factors that might influence results (like seasonality or marketing campaigns), and failing to segment data appropriately. The "peeking problem" (checking results continually and stopping as soon as significance is reached) is particularly problematic and can lead to false positives.
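A small simulation can show why peeking is risky. The sketch below runs many A/A tests (both variants have the same true conversion rate, so any "winner" is a false positive) and compares two policies: declaring a result at any interim check that dips below the threshold, versus checking only once at the end. The parameters and function names are illustrative assumptions.

```python
import random
from math import sqrt
from statistics import NormalDist


def p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        return 1.0  # no variation yet, so nothing to test
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rates(n_sims: int = 300, n_per_arm: int = 2000,
                         peek_every: int = 100, rate: float = 0.1,
                         alpha: float = 0.05, seed: int = 0):
    """Return (false positive rate with peeking, rate with one final look)."""
    rng = random.Random(seed)
    peeking_hits = 0
    final_hits = 0
    for _ in range(n_sims):
        conv_a = conv_b = 0
        crossed = False  # did any interim look cross the threshold?
        for n in range(1, n_per_arm + 1):
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            if n % peek_every == 0 and p_value(conv_a, n, conv_b, n) < alpha:
                crossed = True
        peeking_hits += crossed
        final_hits += p_value(conv_a, n_per_arm, conv_b, n_per_arm) < alpha
    return peeking_hits / n_sims, final_hits / n_sims


if __name__ == "__main__":
    peeking, final = false_positive_rates()
    print(f"peeking: {peeking:.1%}  single final look: {final:.1%}")
```

With a single look, the false positive rate should sit near the 5% threshold; with repeated looks it is noticeably higher, even though nothing about the variants differs.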

Read more on how we think about statistical significance at ezbot. While it is a critical tool, it can often be a crutch.

3. AB Testing Process Step-by-Step

A successful AB testing program follows a structured, cyclical process:

Setting clear goals and KPIs is the foundation of effective testing. Before designing any experiment, define exactly what success looks like. Are you trying to increase purchases, email sign-ups, or engagement metrics? Having specific, measurable outcomes ensures your test will provide actionable insights.

Research and ideation should inform your testing strategy. This includes analyzing quantitative data (analytics, heatmaps, session recordings) and qualitative data (user feedback, surveys, usability tests). Look for pain points, drop-offs in conversion funnels, and user behavior patterns that suggest opportunities for improvement.

Prioritizing test ideas is essential when resources are limited. Several frameworks can help, including ICE (Impact, Confidence, Ease), PIE (Potential, Importance, Ease), and others. These frameworks help ensure you're focusing on tests with the highest potential return on investment rather than simply testing random elements.
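As a quick illustration of ICE scoring, the sketch below ranks a backlog of ideas. It assumes each idea is rated from 1 to 10 on each dimension and that the three ratings are averaged; some teams multiply them instead, so treat the formula and the `Idea` class as one possible convention rather than a standard.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    impact: int      # 1-10: how much could this move the KPI?
    confidence: int  # 1-10: how sure are we that it will?
    ease: int        # 1-10: how cheap is it to build and run?

    @property
    def ice_score(self) -> float:
        # Assumed convention: simple average of the three ratings.
        return (self.impact + self.confidence + self.ease) / 3


def prioritize(ideas):
    """Return ideas sorted from highest to lowest ICE score."""
    return sorted(ideas, key=lambda idea: idea.ice_score, reverse=True)


if __name__ == "__main__":
    backlog = [
        Idea("Shorter checkout form", impact=8, confidence=6, ease=4),
        Idea("New CTA copy", impact=5, confidence=5, ease=9),
        Idea("Redesigned homepage", impact=9, confidence=4, ease=2),
    ]
    for idea in prioritize(backlog):
        print(f"{idea.ice_score:.1f}  {idea.name}")
```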

Creating test variations requires attention to design, copywriting, and technical implementation. Variations should be meaningfully different from the control while maintaining brand consistency. For meaningful results, focus on testing one element at a time unless using multivariate testing approaches.

Running the experiment involves setting up the test in your chosen platform, QA testing to ensure everything works properly, and letting the test run until it reaches the sample size you planned for. The duration will depend on your traffic volume and conversion rates, but rushing tests can lead to unreliable results.

Analyzing results goes beyond simply declaring a winner. Dig into segments, examine secondary metrics that might be affected, and look for insights that could inform future tests. Sometimes the most valuable outcome is not the performance lift but the insights about user behavior.

Implementing winners means putting successful variations into production, documenting learning for the organization, and planning follow-up tests to further optimize. Remember that AB testing is not a one-time activity but an ongoing process of continuous improvement.

4. AB Testing Tools and Technology

The AB testing technology landscape offers numerous platforms with varying capabilities, pricing, and technical requirements. Popular tools include GrowthBook, Optimizely, VWO (Visual Website Optimizer), AB Tasty, Convert, and many others. Enterprise-level solutions offer robust features for segmentation and personalization, while simpler tools might be sufficient for basic testing needs. When evaluating platforms, consider factors like ease of use, integration capabilities, statistical methods used, segmentation options, and reporting features.

Server-side vs. client-side testing represents two fundamentally different approaches to implementation. Client-side testing happens in the user's browser using JavaScript, making it easier to set up but potentially causing issues like flicker or affecting page load speed. Server-side testing occurs before the page is sent to the browser, eliminating these issues but requiring more technical resources to implement. Server-side is increasingly favored for more complex tests, personalization at scale, and testing that affects fundamental site functionality.

Integrating testing with analytics platforms like Google Analytics, Adobe Analytics, or Mixpanel allows for deeper analysis of test results and better understanding of how changes affect the entire user journey. Most testing tools offer direct integrations with popular analytics platforms, enabling you to view test data alongside other important metrics.

Testing approaches differ across platforms. Web testing has the longest history and most mature tools. Mobile app testing requires different implementations, either through native app AB testing SDKs or feature flags in the code. Email testing focuses on subject lines, content layout, and send times, and typically runs inside specialized email marketing platforms. Each environment has unique constraints and opportunities that influence testing strategy.

5. AB Testing Strategy

Building a testing roadmap transforms AB testing from ad-hoc experiments to a structured program driving continuous improvement. An effective roadmap outlines testing themes aligned with business objectives, prioritizes high-impact areas, schedules tests based on available resources, and establishes clear success metrics. Roadmaps typically span 3-12 months, with more detailed plans for the immediate future and broader themes for later periods.

Creating a testing culture within an organization is often more challenging than the technical implementation. This involves educating stakeholders about testing benefits, sharing success stories, establishing processes for hypothesis generation and review, and celebrating both wins and valuable learning from unsuccessful tests. Executive sponsorship is crucial for embedding testing into company decision-making processes.

Test velocity and cadence vary widely depending on organizational resources, traffic volumes, and complexity of tests. High-traffic sites can run multiple concurrent tests, while lower-traffic sites might focus on sequential testing with longer durations. Finding the right balance is key: too few tests limit potential improvements, while too many simultaneous tests can create interaction effects that confound results.

Testing program maturity typically evolves through stages. Beginning programs focus on simple element testing and building infrastructure. Intermediate programs develop more sophisticated hypotheses, segment results, and coordinate across departments. Advanced programs implement personalization, predictive testing, and automated optimization, often leveraging machine learning to continually refine the user experience.

6. Advanced AB Testing Concepts

Segmentation and personalization represent the evolution from one-size-fits-all testing to targeted optimization. By analyzing how different user segments respond to variants, you can uncover insights that would remain hidden in aggregate data. For example, desktop users might prefer a different layout than mobile users, or returning customers might respond differently than first-time visitors. Personalization takes this further by delivering tailored experiences to specific segments, often automatically through algorithms that learn from user behavior patterns.
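Here is a minimal sketch of what a segment breakdown looks like in practice. It assumes your test data can be exported as simple (segment, variant, converted) records; the record shape and the function name are hypothetical.

```python
from collections import defaultdict


def conversion_by_segment(events):
    """Compute the conversion rate for each (segment, variant) pair.

    events: iterable of (segment, variant, converted) tuples,
            e.g. ("mobile", "B", True).
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [conversions, visitors]
    for segment, variant, converted in events:
        totals[(segment, variant)][0] += int(converted)
        totals[(segment, variant)][1] += 1
    return {key: conv / visitors for key, (conv, visitors) in totals.items()}


if __name__ == "__main__":
    events = [
        ("mobile", "A", True), ("mobile", "A", False),
        ("mobile", "B", False), ("mobile", "B", False),
        ("desktop", "A", False), ("desktop", "B", True),
    ]
    for key, rate in sorted(conversion_by_segment(events).items()):
        print(key, f"{rate:.0%}")
```

Keep in mind that each segment has fewer visitors than the test as a whole, so segment-level differences need more caution before you act on them.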

Sequential testing allows for testing multiple variants in succession, refining and improving with each iteration. Instead of simply comparing A versus B, sequential testing might test A versus B, then the winner against C, and so on. This approach is particularly valuable for optimizing complex pages or flows where multiple elements interact.

Bayesian vs. Frequentist statistical approaches represent different philosophies for analyzing test results. Traditional frequentist methods require predetermined sample sizes and fixed testing durations. Bayesian statistics, increasingly popular in testing platforms, allow for more flexible testing by continually updating the probability that a variation is better as data accumulates. This can enable faster decision-making, especially for tests with clear winners.
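One simple way to see the Bayesian approach in action is a Beta-Binomial model: treat each variant's conversion rate as uncertain, update that belief with observed data, and estimate the probability that B is better than A. The sketch below assumes a uniform Beta(1, 1) prior and uses Monte Carlo sampling; it is an illustration of the idea, not the method any specific platform uses.

```python
import random


def prob_b_beats_a(conv_a: int, n_a: int, conv_b: int, n_b: int,
                   draws: int = 20_000, seed: int = 0) -> float:
    """Estimate P(rate_B > rate_A) under independent Beta(1, 1) priors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each variant: Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


if __name__ == "__main__":
    print(prob_b_beats_a(conv_a=50, n_a=1000, conv_b=80, n_b=1000))
```

The output reads naturally as "the chance B is better," and it can be recomputed as new data arrives.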

Multi-armed bandit testing uses machine learning algorithms to dynamically allocate traffic to better-performing variations during the test itself. Unlike traditional AB testing where traffic split remains fixed, multi-armed bandits shift more visitors to winning variations over time, maximizing conversions during the testing period. This approach balances "exploration" (gathering data about different variants) with "exploitation" (sending traffic to the best-performing option).
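Thompson sampling is one well-known bandit algorithm that balances exploration and exploitation. The sketch below simulates it against made-up "true" conversion rates, which would of course be unknown in a real test; the function name and parameters are assumptions for illustration.

```python
import random


def thompson_sampling(true_rates, n_visitors: int = 5000, seed: int = 0):
    """Simulate Thompson sampling; return how many visitors each variant got.

    true_rates: hypothetical true conversion rate for each variant.
    """
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(n_visitors):
        # Exploration: draw a plausible rate for each variant from its posterior.
        samples = [rng.betavariate(1 + s, 1 + f)
                   for s, f in zip(successes, failures)]
        # Exploitation: show the variant whose draw looks best right now.
        arm = samples.index(max(samples))
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]


if __name__ == "__main__":
    print(thompson_sampling([0.05, 0.15]))  # most traffic should go to the second variant
```

Early on, the draws are noisy and traffic is spread out; as evidence accumulates, the weaker variant is shown less and less.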

7. Testing by Element Type

Headlines and copy testing is among the highest-impact areas for optimization. The words you use directly communicate your value proposition and can dramatically affect user comprehension and emotional response. Testing can explore different messaging approaches (benefit-focused vs. feature-focused), tone variations (professional vs. conversational), and length (concise vs. detailed). Even small copy changes can yield significant conversion improvements.

CTA button testing focuses on one of the most crucial interactive elements on any page. Beyond the commonly tested color and size, effective button testing can explore text variations ("Buy Now" vs. "Get Started"), placement, shape, and surrounding elements. The button's relationship with nearby content and the clarity of what happens after clicking are equally important considerations.

Form optimization balances the business need for information with the user's desire for simplicity. Tests might compare single-page vs. multi-step forms, required field counts, field order, error handling, and inline validation. Form abandonment often represents a significant loss of potential conversions, making this area particularly valuable for testing.

Image and visual testing examines how different visual elements affect user behavior. This includes testing product photos (studio vs. lifestyle), hero images, icons, videos vs. static images, and the overall visual hierarchy. Visual elements create immediate emotional responses and set expectations about brand positioning and offerings.

Price and offer testing explores how different pricing strategies, discounts, or special offers impact conversion and revenue. This might include testing price points, displaying prices (with or without currency symbols, decimal places), subscription vs. one-time payment options, or various incentives like free shipping thresholds or bundle discounts.

Navigation and UX testing focuses on how users move through your site or app. Tests might compare different menu structures, search implementations, filtering options, or content organization schemes. The goal is to help users find what they're looking for with minimal friction, recognizing that navigation patterns significantly impact discovery and conversion.

For a simple guide on easy optimizations you can try today, check out 4 one-minute ecommerce optimizations from Griffin.

8. Testing by Page Type

Homepage testing strategies recognize the unique role this page plays as both a brand ambassador and navigation hub. Tests often focus on hero sections, value proposition clarity, featured content selection, and primary call-to-action placement. Because homepages typically serve diverse visitor segments with different needs, segmentation analysis is particularly valuable here.

Landing page optimization focuses on pages designed for conversion from specific traffic sources. Testing often explores headline-offer alignment, form length, social proof placement, and objection handling. Because landing pages typically have a single goal, they're ideal candidates for testing and can show dramatic improvements in conversion rates.

Product page testing aims to provide visitors with the information and confidence needed to make a purchase decision. Tests might compare different product image galleries, specification displays, pricing presentations, related product recommendations, and review implementations. The balance between comprehensive information and clean design is a common testing theme.

Checkout optimization addresses the critical final steps in e-commerce conversions. Tests often focus on reducing form fields, clarifying shipping and tax calculations, streamlining the payment process, and addressing common concerns that cause abandonment. Even small improvements in checkout completion can have significant revenue impacts.

Email testing extends beyond the website to examine how subject lines, preheader text, sender names, content layout, and call-to-action placement affect open rates and click-through rates. A/B testing capabilities are built into most email marketing platforms, making this an accessible entry point for organizations new to testing.

9. Common AB Testing Challenges and Solutions

Low traffic websites face particular challenges in reaching statistical significance within reasonable timeframes. Solutions include focusing on high-impact pages and conversion points, testing larger changes that might produce more substantial effects, using sequential testing approaches, and extending test durations. Some organizations with very low traffic may need to focus on qualitative research methods to supplement limited quantitative data. ezbot's unique AI capabilities make UX optimization possible where traditional experimental methods can't function; AIs, like humans, can make decisions quickly without statistical significance.

Long sales cycles complicate testing for B2B companies and high-consideration purchases. When conversion to final purchase might take weeks or months, testing can focus on micro-conversions (like content downloads or demo requests) that indicate progress through the funnel. Cohort analysis and CRM integration become particularly important for connecting early-funnel experiments to later-stage outcomes.

Legal and privacy concerns have grown with regulations like GDPR, CCPA, and other data protection frameworks. Ensuring testing programs comply with consent requirements, data minimization principles, and privacy policies is essential. This may involve working with legal teams to establish guidelines for what can be tested and how data can be collected and stored.

Technical implementation issues range from flicker effects (where original content briefly displays before test variations) to cross-device consistency and integration with existing systems. Solutions include proper use of asynchronous loading, server-side testing for critical elements, thorough QA processes, and close collaboration between marketing and development teams.

Organizational resistance often stems from concerns about the impact of testing on brand consistency, design integrity, or established processes. Building a testing culture requires education about the benefits of data-driven decision making, sharing success stories, involving stakeholders in the hypothesis generation process, and sometimes starting with smaller, less controversial tests to demonstrate value.

Finally, there may not be a single best version of any element of your website. A significant amount of research indicates we may need to start thinking in new ways about serving each user optimally.

For more information on the types of challenges the world's best experimentation teams are dealing with, you can check out the 2019 paper "Top Challenges from the first Practical Online Controlled Experiments Summit."

10. AB Testing Case Studies

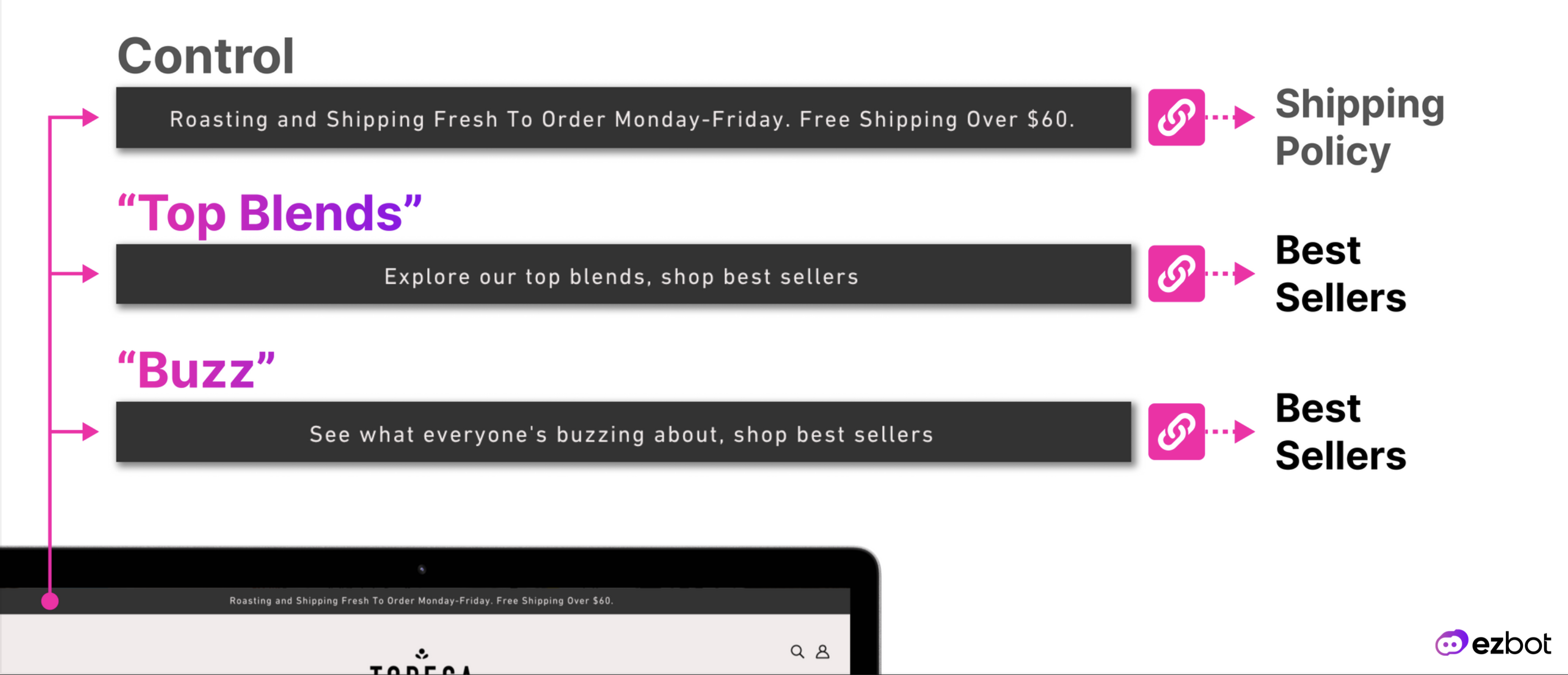

E-commerce success stories demonstrate how systematic testing can dramatically improve revenue metrics. Companies like Amazon, Shopify merchants, and niche retailers have used AB testing to optimize product imagery, refine recommendation algorithms, streamline checkout processes, and personalize shopping experiences. These optimizations often compound over time, with seemingly small conversion improvements translating to significant annual revenue increases. ezbot's capability to handle low traffic has generated some great case studies, like this one from Topeca Coffee Roasters.

SaaS conversion improvements through testing focus on optimizing user acquisition and activation. Case studies show how companies have tested different trial periods, feature highlighting approaches, onboarding sequences, and pricing presentations to increase both sign-up rates and subsequent conversions to paid plans. The subscription model of SaaS makes customer acquisition costs and lifetime value particularly important metrics to optimize.

B2B lead generation wins demonstrate how testing can improve even complex, high-consideration purchase journeys. Successful B2B testing often focuses on qualifying leads more effectively, providing the right content for different buyer personas, optimizing form strategies, and refining nurture sequences. These tests may measure quality of leads rather than just quantity, recognizing that sales cycle length and deal size are crucial metrics.

Mobile optimization examples show how testing can address the unique constraints and opportunities of smaller screens and touch interfaces. Successful mobile tests have explored navigation patterns (hamburger menus vs. tab bars), form input methods, touch target sizing, and content prioritization. With mobile traffic now dominating in many industries, these optimizations have become essential rather than optional.

Considered by many to be one of the seminal case studies in AB testing, the 2008 Obama campaign generated an additional $60 million in donations through two simple tests. This case study showed the world how very small changes to user experience could have major impacts on the bottom line.

11. Future of AB Testing

AI and machine learning in testing are transforming how experiments are conceived, executed, and analyzed. Machine learning can identify patterns in user behavior that suggest test opportunities, predict which variations might perform best for different segments, and analyze results across multiple dimensions simultaneously. As these technologies mature, they enable more sophisticated personalization and testing at scale that would be impossible to manage manually.

Automation and continuous optimization represent the evolution from manual testing campaigns to systems that constantly refine the user experience. Rather than running discrete tests with fixed start and end dates, these approaches use algorithms to continually allocate traffic to better-performing variations, automatically test new ideas, and apply winning combinations to relevant segments. This moves testing from a project-based approach to an always-on system of improvement.

Privacy-first testing approaches are emerging in response to increased regulation and changing consumer expectations about data use. Future testing methodologies will likely rely less on individual user tracking and more on aggregate data, first-party data relationships, and contextual signals. This shift will require new technical implementations and potentially different statistical approaches for valid experimentation.

Emerging methodologies include innovations like intent-based testing (optimizing for user goals rather than company conversions), offline/online connected experimentation (linking digital tests to physical world outcomes), and cross-platform experience optimization. As digital experiences become more complex and distributed across devices and channels, testing approaches will evolve to measure and optimize these interconnected journeys.

12. Getting Started with AB Testing

First steps to implement a program include auditing current performance to identify optimization opportunities, selecting appropriate testing tools for your technical environment and traffic volume, establishing baseline metrics for key conversion points, and developing initial hypotheses based on both analytics data and qualitative research. Start with simple tests on high-traffic pages to build momentum before tackling more complex experiments.

Essential resources and communities provide ongoing education and support for testing practitioners. These include industry blogs like CXL, Optimizely's Knowledge Base, and VWO's resources; communities like Measure Slack and Growth Hackers; conferences such as Opticon and ConversionXL Live; and books like "A/B Testing: The Most Powerful Way to Turn Clicks Into Customers" and "You Should Test That." These resources help practitioners stay current with evolving best practices.

Building your testing team involves identifying the necessary skills and roles. Small organizations might start with a single growth marketer partnering with developers as needed, while larger programs might include dedicated conversion optimization specialists, UX researchers, designers, developers, data analysts, and a program manager. The structure depends on testing volume, technical complexity, and organizational size.

Measuring program success goes beyond individual test results to assess the overall impact of your testing program. Key metrics include win rate (percentage of tests producing statistically significant improvements), average impact per successful test, test velocity (number of tests executed per month), and most importantly, the cumulative business impact of implemented winners. Tracking both the direct ROI of the testing program and its broader influence on creating a data-driven culture provides a complete picture of value.
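The program-level metrics above are straightforward to compute once test outcomes are logged. Here is a small sketch, assuming each finished test is recorded as a dictionary with a `lift` value and a `significant` flag; that record format is hypothetical.

```python
def program_metrics(tests, months: float):
    """Summarize a testing program from a log of finished tests.

    tests:  list of dicts like {"lift": 0.08, "significant": True}
    months: length of the period the log covers
    """
    winners = [t for t in tests if t["significant"] and t["lift"] > 0]
    win_rate = len(winners) / len(tests) if tests else 0.0
    avg_lift = (sum(t["lift"] for t in winners) / len(winners)) if winners else 0.0
    return {
        "win_rate": win_rate,               # share of tests with a significant improvement
        "avg_winning_lift": avg_lift,       # average impact per successful test
        "velocity": len(tests) / months,    # tests executed per month
    }


if __name__ == "__main__":
    log = [
        {"lift": 0.08, "significant": True},
        {"lift": 0.12, "significant": True},
        {"lift": 0.01, "significant": False},
        {"lift": -0.05, "significant": True},
    ]
    print(program_metrics(log, months=2))
```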

ezbot makes it simple to start testing as many ideas as possible, as soon as possible. Consider leaving a lot of the mental effort to AI, and focusing on the core needs of your business.